Most AI coding tools today work like one very smart freelancer. Talented, fast, but still one person juggling code, tests, and bug fixes all at once. Cursor's new /orchestrate replaces that model. With a single command, the lone freelancer becomes a small coordinated team, with each agent handling a specific piece of the job.

What Cursor Just Shipped

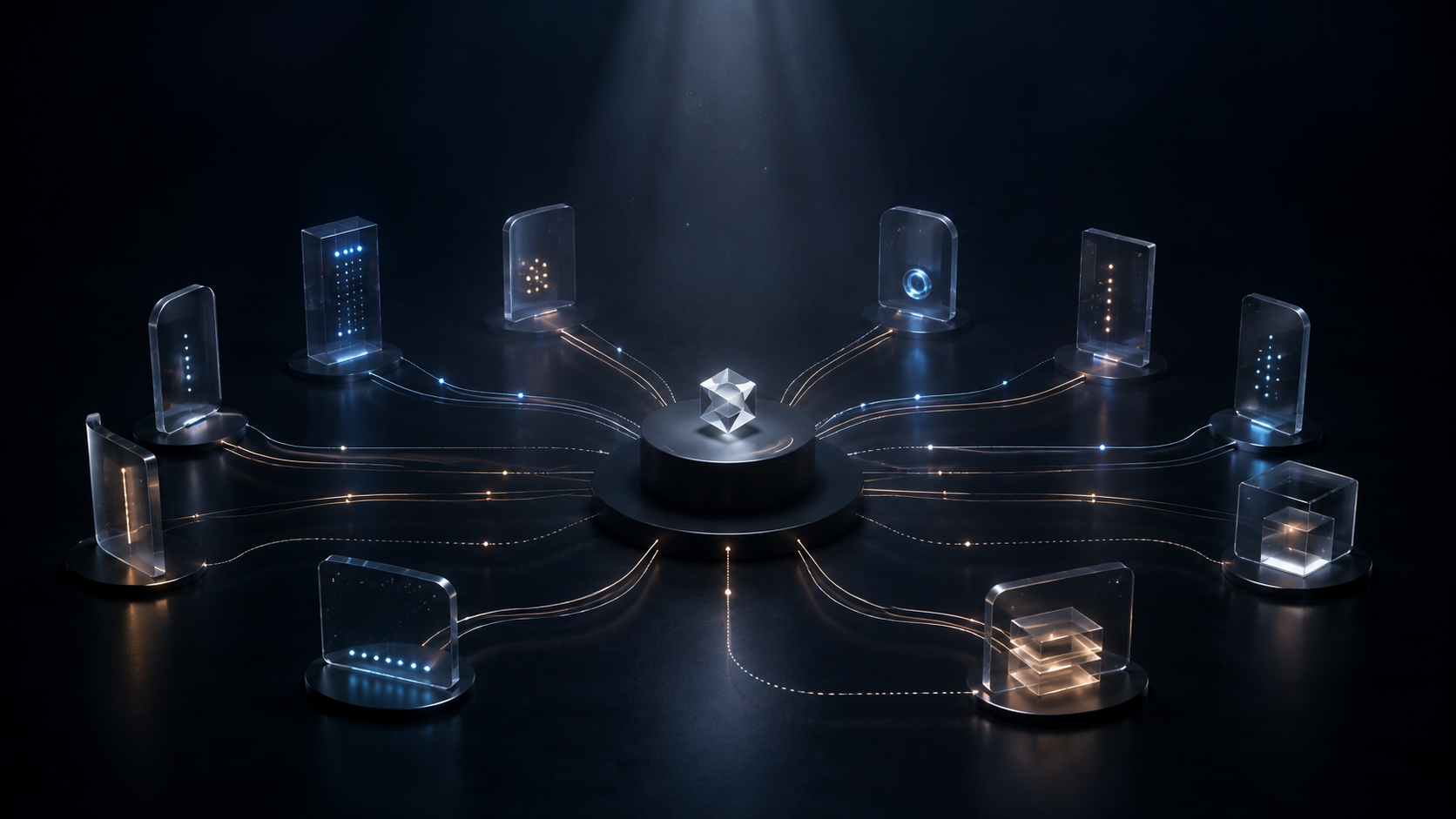

Cursor, one of the most popular AI coding tools in the world, has launched a new skill for its SDK called /orchestrate. The headline feature is recursive agent spawning: a top-level AI agent can create its own team of smaller agents, hand them sub-tasks, and let those agents create helpers of their own if needed. One agent goes in. A whole tree of agents comes out, each working on a different piece of a big problem.
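To make "a tree of agents" concrete, here is a minimal Python sketch of recursive spawning. It is not the Cursor SDK API. The `Agent` class, the `spawn` method, the `max_depth` cap, and the `toy_split` decomposition rule are all hypothetical names invented for illustration. A real planner would presumably use a model to decide how to split a task.

```python
from __future__ import annotations
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Agent:
    """Hypothetical agent node: one task plus any helpers it spawned."""
    task: str
    depth: int = 0
    children: list[Agent] = field(default_factory=list)

    def spawn(self, split: Callable[[str], list[str]], max_depth: int = 2) -> Agent:
        """Create one child per sub-task, then let each child spawn its own."""
        if self.depth >= max_depth:
            return self  # assumed depth cap so the tree cannot grow forever
        for sub_task in split(self.task):
            child = Agent(task=sub_task, depth=self.depth + 1)
            child.spawn(split, max_depth)  # the recursive step
            self.children.append(child)
        return self

    def count(self) -> int:
        """Total number of agents in this subtree, including this one."""
        return 1 + sum(child.count() for child in self.children)


def toy_split(task: str) -> list[str]:
    """Stand-in decomposition rule: split on ' and '; atomic tasks yield nothing."""
    parts = task.split(" and ")
    return parts if len(parts) > 1 else []


root = Agent("write parser and add tests and update docs").spawn(toy_split)
print(root.count(), [child.task for child in root.children])
```

In this toy run the root agent spawns three children, and none of them split further because their tasks are already atomic. The depth cap is a design choice of the sketch, not something the announcement describes.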

This matters because the single-agent model, however impressive, hits a ceiling on truly large or messy projects. /orchestrate is a step toward something different: a coordinated team of AI workers, each with a clear role.

How the Architecture Works

In simplified form, a planner agent sits at the top. It looks at the full task, breaks it down, and spawns worker agents to write the code. Then comes the most interesting part of the system: verifier agents. Their only job is to run the code, test it, and check whether the work is actually correct. If a verifier rejects the work, the planner spawns fresh worker agents to fix the problem. If the next attempt also fails, the loop repeats. The system keeps trying until the work passes review.
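The plan, work, verify, retry loop described above can be sketched in a few lines of Python. This is an illustrative sketch, not Cursor's implementation: `run_until_verified`, `spawn_worker`, and `verify` are hypothetical names, the worker and verifier are toy stand-ins, and the `max_attempts` cap is an added assumption so the example always terminates.

```python
from typing import Any, Callable, Optional, Tuple

Worker = Callable[[str, Optional[str]], Any]
Verifier = Callable[[Any], Tuple[bool, Optional[str]]]


def run_until_verified(
    task: str,
    spawn_worker: Callable[[], Worker],
    verify: Verifier,
    max_attempts: int = 5,  # assumed safety cap, not from the announcement
) -> Tuple[Any, int]:
    """Planner loop: spawn a fresh worker each round until the verifier passes."""
    feedback: Optional[str] = None
    for attempt in range(1, max_attempts + 1):
        worker = spawn_worker()              # fresh worker for every attempt
        candidate = worker(task, feedback)   # worker sees the last failure report
        passed, feedback = verify(candidate)
        if passed:
            return candidate, attempt
    raise RuntimeError(f"no passing result after {max_attempts} attempts")


def spawn_worker() -> Worker:
    """Toy worker: the first draft is deliberately wrong, the retry is correct."""
    def worker(task: str, feedback: Optional[str]) -> Callable[[int, int], int]:
        if feedback is None:
            return lambda a, b: a - b  # looks plausible, but is not addition
        return lambda a, b: a + b
    return worker


def verify(candidate: Callable[[int, int], int]) -> Tuple[bool, Optional[str]]:
    """Toy verifier: actually runs the candidate instead of trusting it."""
    got = candidate(2, 3)
    if got == 5:
        return True, None
    return False, f"expected 5, got {got}"


add, attempts = run_until_verified("implement add", spawn_worker, verify)
print(attempts, add(10, 4))
```

The key design point is that the verifier executes the candidate and returns a concrete failure message, which the next worker receives as feedback. The first draft here fails the check, so the loop needs a second attempt before it returns.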

That last part is the quiet breakthrough. Single-agent AI coding tools often confidently produce code that looks right but does not actually work. Adding a separate layer of agents whose entire purpose is to verify the work changes the dynamic. The system can catch its own mistakes and self-correct, instead of waiting for a human to notice.

The Real Numbers

Cursor shared two figures from its internal use of the tool. On its own research workflows, /orchestrate cut token usage by 20% while also improving evaluation scores. Lower cost and better quality usually pull in opposite directions, so achieving both is notable. On its backend infrastructure, the same approach delivered 80% faster cold start times. These are not lab numbers. They are results from Cursor's own engineering team using the tool on its own work.

What This Means

The bigger picture is this. The next phase of AI is not really about one model getting smarter and smarter on its own. It is about smaller specialized agents working together, checking each other, and recovering from failure. The same pattern is likely to show up in marketing automation, customer support, financial analysis, and almost any complex knowledge work. Cursor is just one of the first companies to package it cleanly for engineering teams.

/orchestrate is available now as a plugin in the Cursor Marketplace, designed for teams already building on the Cursor SDK. If you are running custom workflows or ambitious internal tools, it is worth a serious look.

The broader takeaway is simpler. AI is starting to organize itself into teams. The companies that learn to design those teams well, with good planners, capable workers, and honest verifiers, will pull ahead of everyone still relying on a single agent to do everything alone.