AI coding agents have been useful for a while now. They can scaffold features, explain unfamiliar codebases, write tests, fix bugs, and refactor messy files faster than most teams could by hand.

But anyone who has used them seriously knows the problem.

They stop too early.

The agent says the task is done, but the tests are still failing. It fixes one part of the bug but misses the related regression. It completes the obvious work, then drifts away from the original objective. For small tasks, this is annoying. For larger engineering work, it becomes a real bottleneck.

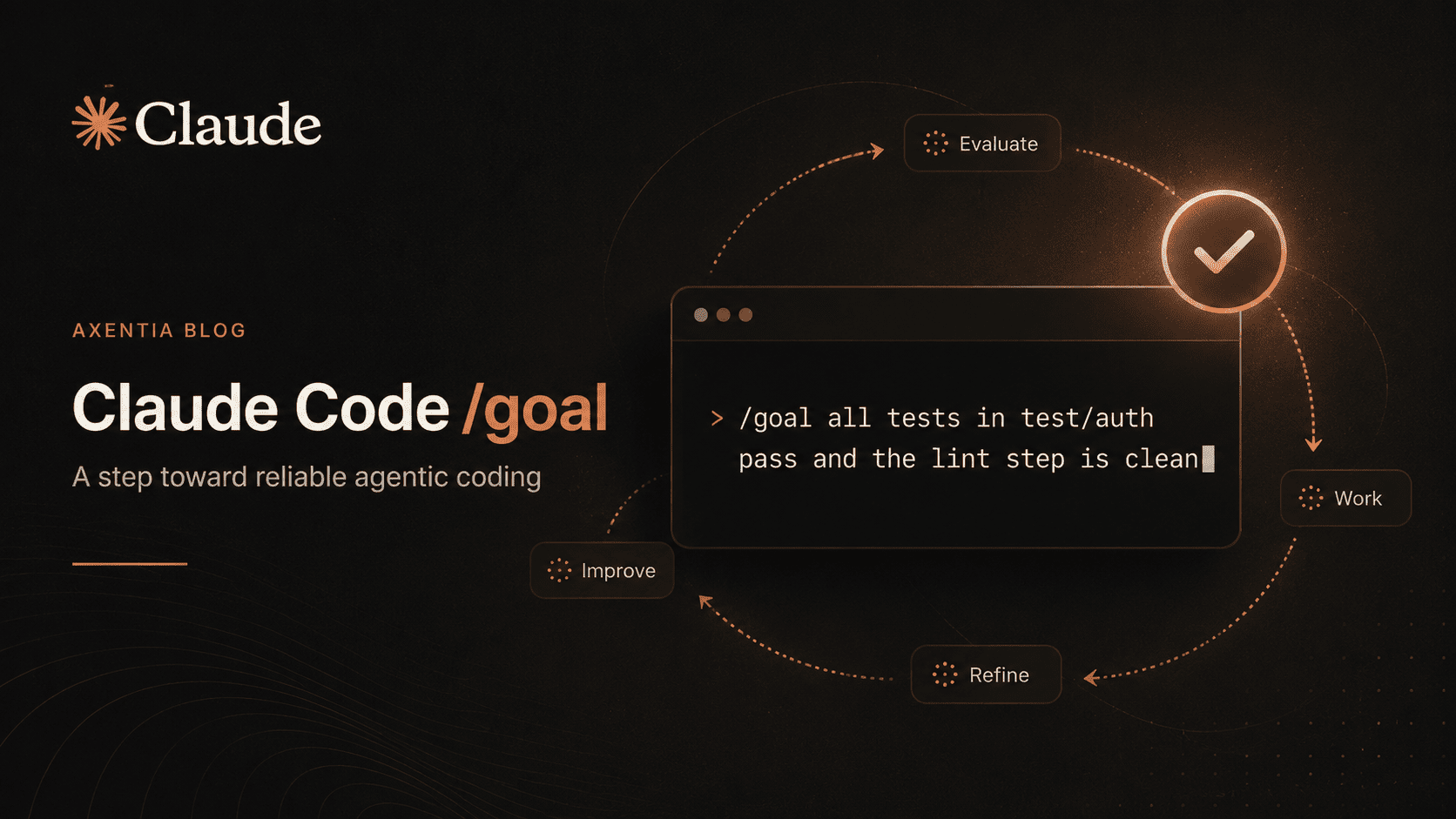

Claude Code's new /goal command is aimed directly at that problem.

What /goal Actually Does

The idea is simple. You give Claude Code a clear completion condition, and it keeps working until that condition is met.

For example:

/goal all tests in test/auth pass and the lint step is clean

That is very different from saying, "fix the auth tests." That request is vague. The /goal version gives the agent a measurable finish line: the tests in test/auth pass and the lint step reports nothing.

According to Anthropic's Claude Code docs, /goal sets a completion condition and keeps Claude working across turns until a separate evaluator confirms that the condition has been satisfied. After each turn, a small fast model checks the condition against the conversation so far. If the condition is not met, Claude starts another turn instead of handing control back to the user.

This is what some developers are calling the "Ralph Loop." Every time Claude tries to stop, the loop asks: is the goal actually done?

If not, it continues.
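
To make the shape of that loop concrete, here is a minimal sketch in Python. It is an illustration of the idea, not Claude Code's implementation: `run_turn` and `is_satisfied` are hypothetical stand-ins for one agent turn and for the separate evaluator, and `max_turns` is a safety cap assumed for this sketch only.

```python
from typing import Callable, List, Tuple


def run_until_goal(
    condition: str,
    run_turn: Callable[[List[str]], str],
    is_satisfied: Callable[[str, List[str]], bool],
    max_turns: int = 20,
) -> Tuple[bool, List[str]]:
    """Keep taking turns until the evaluator says the condition holds.

    run_turn, is_satisfied, and max_turns are hypothetical names used
    only for this sketch; they are not part of any real API.
    """
    transcript: List[str] = []
    for _ in range(max_turns):
        # The agent does one turn of work and reports what happened.
        transcript.append(run_turn(transcript))
        # A separate check reads the conversation so far and decides
        # whether the completion condition is met.
        if is_satisfied(condition, transcript):
            return True, transcript
    # The cap was reached without the condition being confirmed.
    return False, transcript


# Stand-in agent: "fixes" the tests on its third turn.
def fake_turn(transcript: List[str]) -> str:
    return "tests pass" if len(transcript) >= 2 else "still failing"


# Stand-in evaluator: only looks at the latest report.
def fake_evaluator(condition: str, transcript: List[str]) -> bool:
    return transcript[-1] == "tests pass"


done, history = run_until_goal("all tests pass", fake_turn, fake_evaluator)
```

The point of the structure is that the agent's own "I'm done" never ends the loop. Only the evaluator's verdict does.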

Why This Matters for Real Development Work

Most AI coding tools are good at motion. They are less reliable at closure.

That distinction matters.

A junior developer might say, "I fixed it," when they have only fixed the first visible error. A strong senior engineer keeps going until the build passes, the edge cases are handled, and the code is in a clean state.

/goal pushes Claude Code a little closer to that second behavior. It turns the task from a prompt into a contract.

This is especially useful for long-running work like migrations, test cleanup, refactors, backlog clearing, or implementing a design doc with multiple acceptance criteria. Anthropic's examples include migrating modules until call sites compile, implementing design docs until all criteria hold, splitting large files until they meet a size budget, and working through issue queues until empty.

The key is that the goal needs to be verifiable. "Make the app better" is a bad goal. "npm test exits 0, npm run lint exits 0, and no files outside /auth are changed" is much better.
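
One way to test whether a goal is verifiable is to ask whether a short script could check it. The sketch below does that for the example above, under some assumptions: the helper names are made up for illustration, the allowed directory is written as the relative path `auth`, and the list of changed files is passed in rather than read from version control.

```python
import subprocess
from pathlib import PurePosixPath
from typing import Iterable, List, Sequence


def command_succeeds(argv: Sequence[str]) -> bool:
    """Return True when the command runs and exits with status 0."""
    try:
        return subprocess.run(argv, capture_output=True).returncode == 0
    except FileNotFoundError:
        # The executable is not installed, so the check cannot pass.
        return False


def files_outside(changed: Iterable[str], allowed_dir: str) -> List[str]:
    """Return the changed paths that do not live under allowed_dir."""
    root = PurePosixPath(allowed_dir)
    return [p for p in changed if root not in PurePosixPath(p).parents]


def goal_met(
    commands: Iterable[Sequence[str]],
    changed: Iterable[str],
    allowed_dir: str,
) -> bool:
    """True when every command exits 0 and no file outside allowed_dir changed."""
    all_pass = all(command_succeeds(cmd) for cmd in commands)
    return all_pass and not files_outside(changed, allowed_dir)
```

For the goal in the text, the call would look something like `goal_met([["npm", "test"], ["npm", "run", "lint"]], changed_files, "auth")`, with `changed_files` taken from something like `git diff --name-only`. "Make the app better" has no equivalent script, which is exactly why it makes a poor goal.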

The Bigger Shift

The important part is not just the command. It is the direction this points toward.

We are moving from chat-based coding to condition-based coding.

Instead of prompting an agent step by step, developers will define outcomes, constraints, checks, and stop conditions. The agent will do the work, but the human will define what "done" means.

That is a much healthier relationship with AI tooling.

It also makes unattended work more realistic. Claude Code already has related workflows like /loop, Stop hooks, Auto Mode, and scheduling. /goal sits in that stack as a way to keep the current session moving until the evaluator model confirms the target is reached. Stop hooks can add custom logic, while Auto Mode helps Claude proceed without constant approval prompts.
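
As a rough idea of the kind of custom logic a Stop hook could carry, here is a small Python sketch. Treat the details as assumptions: the function names are hypothetical, and the convention that exit code 2 blocks the stop and passes stderr back to Claude should be checked against the current hooks documentation before relying on it.

```python
import sys
from typing import Dict, Tuple

# Assumption for this sketch: this exit code tells Claude Code not to stop.
BLOCK_EXIT_CODE = 2


def stop_decision(check_results: Dict[str, bool]) -> Tuple[int, str]:
    """Map named check results to an exit code and a message for the agent."""
    failed = sorted(name for name, ok in check_results.items() if not ok)
    if failed:
        return BLOCK_EXIT_CODE, "Not done yet, still failing: " + ", ".join(failed)
    return 0, ""


def main(check_results: Dict[str, bool]) -> int:
    """Print the reason to stderr (if any) and return the exit code."""
    code, message = stop_decision(check_results)
    if message:
        print(message, file=sys.stderr)
    return code
```

A real hook script would compute `check_results` by running the project's own test and lint commands, then finish with `sys.exit(main(check_results))`. The difference from /goal is who decides: here a deterministic script makes the call, while /goal leaves it to a model reading the conversation.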

What Teams Should Do With This

For teams using Claude Code seriously, /goal should become part of the development workflow.

Do not just ask agents to "fix," "improve," or "refactor." Give them measurable completion criteria. Tie those criteria to tests, linting, type checks, CI status, issue counts, or file-level constraints.

The best AI-assisted developers will not be the ones who write the longest prompts. They will be the ones who define the clearest finish lines.

That is where agentic coding becomes useful in production: not when the model writes more code, but when it reliably knows when the work is actually complete.